The Four-Phase Breakdown Cycle

Where it starts to go wrong

The moment rarely announces itself. It usually sounds like a passing comment in a review meeting: "This doesn't really sound like us."

Most teams experiencing this assume they have a people problem — someone needs better prompts, the contractor needs more onboarding, the new hire hasn't absorbed the voice yet. The diagnosis makes sense at the symptom level.

But the cause is structural: no shared, persistent organizational context exists for the AI tools producing all this content. Each person reconstructs that context from memory on every interaction, and every reconstruction is slightly different.

This breakdown follows a recognizable pattern. We've watched it play out across organizations of different sizes, sectors, and AI maturity levels. Most teams can locate themselves in the cycle once the phases are named.

The early period — when everything seems to work

The organization starts using AI tools. A few team members draft blog posts, grant narratives, social copy, or internal communications with ChatGPT, Claude, or something comparable. The early output is impressive. Content that used to take hours takes minutes. Volume goes up. Leadership is encouraged.

Nothing has gone visibly wrong, so nobody questions the approach. The AI-generated content is competent — it reads well, it's structured cleanly, and if it doesn't sound exactly like the organization, it's close enough. The speed gains feel significant.

This period works as long as volume stays low, the team stays small, and consistency requirements are minimal. One or two people prompting the same tool with similar context produce roughly similar output. The structural problem is already present. It just isn't visible yet.

Phase 1 — Growth pressure exposes the gap

The team grows. Content volume increases. More people start prompting AI tools — a new hire, a contractor, a board member drafting their own communications. The conditions that made the early period work no longer hold.

The CEO's AI-assisted newsletter sounds different from the program director's grant report. A contractor uses language the organization moved past two years ago. Two people describe the same program differently because each rebuilt the context from their own memory of what the organization sounds like.

These inconsistencies get attributed to the individuals producing them. On the surface, that's fair: those people are producing inconsistent output. But the cause isn't their skill level. It's that no documented organizational intelligence exists for them to work from. Each person is doing their best approximation of the organization's voice, positioning, and evidence standards, reassembled fresh on every interaction with every tool.

If the team is small enough and the stakes are low enough, Phase 1 feels like a manageable annoyance. An extra round of edits. A conversation about tone. It becomes unmanageable when the volume, the team size, or the stakeholder scrutiny increases — which, for growing organizations, happens on a predictable timeline.

This pressure is accelerating. According to Anthropic's March 2026 Economic Index report, AI use cases are diversifying rapidly — the concentration of tasks is declining as adoption broadens beyond early technical users into a wider range of work. That's the macro version of what Phase 1 looks like inside an organization: more people, more use cases, more content, and no shared infrastructure holding it together.

Phase 2 — The breakdown compounds

The inconsistencies from Phase 1 stop being occasional and start being structural.

Voice inconsistency becomes the norm. AI-generated content across the organization sounds generically competent but not recognizably like the organization. The voice that stakeholders and funders associated with the organization, the thing that made its communications recognizably its own, has been diluted. Different content channels start sounding like different organizations.

Positioning drifts. Without documented constraints on what the organization does and doesn't say, AI tools default to patterns from their training data. Industry clichés appear. Competitor vocabulary creeps in. Language that contradicts the organization's positioning — a term it specifically avoids, a framing its sector has moved past — shows up in published content because nothing in the production workflow filtered it out.

Claims go unverified. AI tools generate assertions the organization can't substantiate. A draft grant proposal references an outcome the program hasn't measured. A blog post implies a partnership that hasn't been formalized. Without a documented inventory of what the organization can and cannot claim, AI output includes plausible-sounding statements that nobody catches until a stakeholder challenges them.

Revision cycles increase. We've seen cycles run to five or more rounds when AI operates without systematic constraints. Each round requires someone who carries organizational knowledge in their head to identify what's wrong, explain the correction, and verify the fix. The efficiency gains from the early period get consumed by the cost of correction.

Quality control stays reactive. Problems get identified after drafts are complete — or after publication. There's no systematic mechanism for catching forbidden language, unverified claims, or voice inconsistency before they appear in a draft. The organization is debugging content one piece at a time instead of preventing the errors structurally.
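To make "preventing the errors structurally" concrete, here is a minimal sketch of a check that runs before a draft reaches review. It is an illustration under stated assumptions, not a description of any particular product: the `ContentRules` class, both function names, and every term and claim in the example are hypothetical placeholders. It also assumes the claims in a draft have already been listed by a person or a separate step, since pulling claims out of free text reliably is a harder problem than this sketch attempts.

```python
import re
from dataclasses import dataclass, field


@dataclass
class ContentRules:
    """Hypothetical rule set. All terms and claims used here are placeholders."""
    forbidden_terms: list[str] = field(default_factory=list)
    approved_claims: list[str] = field(default_factory=list)


def find_forbidden_terms(draft: str, rules: ContentRules) -> list[str]:
    """Return each forbidden term that appears in the draft (case-insensitive)."""
    hits = []
    for term in rules.forbidden_terms:
        # Word boundaries keep a short term from matching inside a longer word.
        pattern = r"\b" + re.escape(term) + r"\b"
        if re.search(pattern, draft, flags=re.IGNORECASE):
            hits.append(term)
    return hits


def find_unapproved_claims(claims: list[str], rules: ContentRules) -> list[str]:
    """Return claims that are missing from the approved inventory.

    Matching is exact after trimming and lowercasing, which is deliberately
    strict: a reworded claim is flagged for a human to look at.
    """
    approved = {c.strip().lower() for c in rules.approved_claims}
    return [c for c in claims if c.strip().lower() not in approved]


# Example usage with made-up values.
rules = ContentRules(
    forbidden_terms=["at-risk youth", "world-class"],
    approved_claims=["Served 400 families in 2024"],
)
draft = "Our world-class program supports families across the region."
claims = [
    "served 400 families in 2024",
    "partnered with the city health department",
]

print(find_forbidden_terms(draft, rules))    # ['world-class']
print(find_unapproved_claims(claims, rules))  # ['partnered with the city health department']
```

The point of the sketch is where the check sits, not how clever it is. Even a crude filter that runs on every draft moves quality control from after the fact to before it, and the rule set lives in one place instead of in someone's head.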

The team usually knows something is wrong at this stage. The language they use to describe it is revealing: "We need better writers." "We need to train the team on AI." "Our contractor isn't getting the voice."

The diagnosis stays at the individual level because the structural cause — missing infrastructure — doesn't have a name yet.

Phase 3 — Crisis

The structural problem becomes a credibility problem.

Phase 3 rarely arrives as a single event. It's the accumulation of Phase 2 failures reaching an audience that holds the organization accountable — a funder, a regulator, a journalist, a board member. The cost at this point isn't revision time. It's institutional credibility.

Stakeholder confidence depends on consistency — that the organization says the same things, in the same voice, with verifiable claims, across every channel and every person. When that consistency fails visibly, the damage is to trust.

What the cycle reveals

The four-phase cycle isn't a story about AI tools failing. AI tools perform as designed: they produce plausible output given the input they receive. The problem is that most organizations provide no persistent input beyond the immediate prompt.

No documented voice architecture. No positioning constraints. No evidence standards. No record of what language the organization does not use.

Better prompts improve individual sessions but don't persist across them. Style guides describe preferences but aren't structured as constraints an AI tool can execute against. More tools multiply the problem — each one starts from the same zero.

The same Anthropic research found that users with six or more months of experience showed measurably higher success rates in their AI interactions — not because they brought easier tasks, but because they had developed systematic habits and strategies for working with the tool.

The experienced users collaborated more, delegated less, and iterated more carefully. The gap between effective and ineffective AI use isn't about the tool. It's about the infrastructure — whether individual or organizational — that the user brings to the interaction.

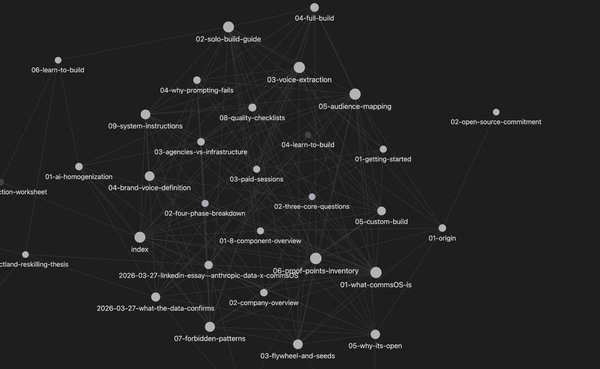

What's missing is the persistent knowledge layer that loads before any prompt and constrains AI output systematically — encoding how the organization communicates, what it can claim, and which voice writes in which context. That layer is the structural response to a structural problem.
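One way to picture that layer is as a small, stored record that gets placed ahead of whatever the user types. The sketch below is a rough illustration only. `OrgContext`, `build_system_context`, and `compose_request` are hypothetical names, the sample values are invented, and the `role`/`content` message shape is a generic chat convention rather than any specific vendor's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OrgContext:
    """Hypothetical persistent record of how the organization communicates."""
    voices: dict[str, str]            # channel -> voice description
    approved_claims: tuple[str, ...]  # what the organization can substantiate
    avoided_terms: tuple[str, ...]    # language the organization does not use


def build_system_context(ctx: OrgContext, channel: str) -> str:
    """Render the stored context as text that loads before any prompt."""
    if channel not in ctx.voices:
        # Fail loudly instead of falling back to a generic voice.
        raise KeyError(f"No voice documented for channel: {channel!r}")
    lines = [
        f"Voice for {channel}: {ctx.voices[channel]}",
        "Only make these claims: " + "; ".join(ctx.approved_claims),
        "Never use these terms: " + ", ".join(ctx.avoided_terms),
    ]
    return "\n".join(lines)


def compose_request(ctx: OrgContext, channel: str, user_prompt: str) -> list[dict[str, str]]:
    """Put the organizational context ahead of the individual's prompt."""
    return [
        {"role": "system", "content": build_system_context(ctx, channel)},
        {"role": "user", "content": user_prompt},
    ]


# Example usage with made-up values.
ctx = OrgContext(
    voices={
        "newsletter": "warm, first-person plural, short paragraphs",
        "grant_report": "precise, evidence-led, no superlatives",
    },
    approved_claims=("Served 400 families in 2024",),
    avoided_terms=("world-class", "at-risk youth"),
)

messages = compose_request(ctx, "grant_report", "Draft the outcomes section.")
print(messages[0]["content"])
```

Whoever writes the prompt, the same record comes first, so the context is no longer rebuilt from memory on each interaction. Raising an error for an undocumented channel is a design choice in this sketch: it surfaces a gap in the record instead of quietly producing generic output.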